AWS Systems Manager Parameter Store is a key-value store where developers can keep configuration parameters securely. You can store parameters as plain strings (String) or as encrypted strings (SecureString), and access them through the API or the CLI. You can reference parameter names in scripts, SSM documents, and CodeBuild buildspec files.
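As a quick illustration of API access, here is a minimal sketch that reads a single parameter with boto3. The helper name `read_parameter` and the parameter name /RDS/CLUSTER/DB_NAME are placeholders chosen for this example, and it assumes your default AWS credentials are allowed to read the parameter.

```python
def read_parameter(ssm_client, name):
    """Return the value of one parameter, decrypted if it is a SecureString."""
    response = ssm_client.get_parameter(Name=name, WithDecryption=True)
    return response["Parameter"]["Value"]


def main():
    # boto3 is imported here so the helper above can be tried without AWS access.
    import boto3

    # "/RDS/CLUSTER/DB_NAME" is a placeholder; use one of your own parameter names.
    ssm = boto3.Session().client("ssm")
    print(read_parameter(ssm, "/RDS/CLUSTER/DB_NAME"))
```

Calling `main()` prints the value of the parameter. `WithDecryption=True` has no effect on plain String parameters, so the same helper works for both types.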

Requirements

- An AWS CLI profile configured.

- Access to Parameter Store and S3 in your AWS account.

- Python 3 and boto3 installed on your machine.

Scenario

RDS database credentials are commonly kept in Parameter Store, and sometimes these values have to be changed at the database level. After such a change, the corresponding parameters must be updated as well. Because this update is done by hand, it is error-prone. For critical systems and major applications, it is therefore good practice to back up the parameters first, so that the previous values can be restored if anything goes wrong.

Solution

As a solution for this scenario, we can write the existing values of these parameters to a text file. To automate this, I wrote the Python script given below.

    import sys

    import boto3

    # Replace the placeholders with your AWS region and CLI profile name.
    REGION = "<Region>"
    PROFILE = "<profile_name>"

    session = boto3.Session(region_name=REGION, profile_name=PROFILE)
    client = session.client("ssm")
    s3 = session.client("s3")

    # If the parameter name is /RDS/CLUSTER/CHAT_DBNAME, enter /RDS/CLUSTER/
    # as the common filter (path) and CHAT as the prefix.
    path = input("Please enter the common filter : ")
    prefix = input("Please enter the prefix after the filter : ")

    file_name = f"ssm-backup-{prefix}.txt"

    paginator = client.get_paginator("get_parameters_by_path")
    pages = paginator.paginate(
        Path=path,
        Recursive=True,
        WithDecryption=True,  # without this, SecureString values come back encrypted
    )

    # Write every matching parameter as "NAME = VALUE", one per line.
    with open(file_name, "w") as backup_file:
        for page in pages:
            for entry in page["Parameters"]:
                name = entry["Name"]
                value = entry["Value"]
                if name.startswith(path + prefix):
                    backup_file.write(f"{name} = {value} \n")

    consent = input("Do you want to upload the backup file to S3? (yes/no) ").strip().lower()

    if consent == "yes":
        bucket = input("Do you have a bucket to upload this file to? (yes/no) ").strip().lower()
        if bucket == "yes":
            bucket_name = input("Please enter the name of the existing bucket : ")
            s3.upload_file(file_name, bucket_name, file_name)
            print(f"The file {file_name} has been uploaded to the bucket {bucket_name}")
        elif bucket == "no":
            bucket_name = input("Please enter the name of the bucket you need to create : ")
            try:
                # Note: for us-east-1, omit CreateBucketConfiguration.
                s3.create_bucket(
                    Bucket=bucket_name,
                    CreateBucketConfiguration={"LocationConstraint": REGION},
                )
                print(f"Bucket with name {bucket_name} has been created successfully")
                s3.upload_file(file_name, bucket_name, file_name)
                print(f"The file {file_name} has been uploaded to {bucket_name}")
            except Exception as e:
                print(f"Failed to create or upload to the bucket {bucket_name} : {e}")
        else:
            print("Please enter a valid input (yes/no)")
    elif consent == "no":
        sys.exit(0)
    else:
        print("Please enter a valid input (yes/no)")

This Python script uses Boto3 and the SSM client to back up parameters based on the path and prefix.

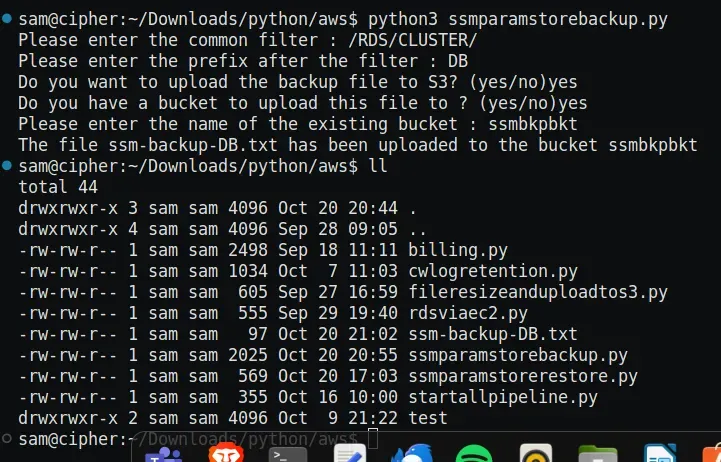

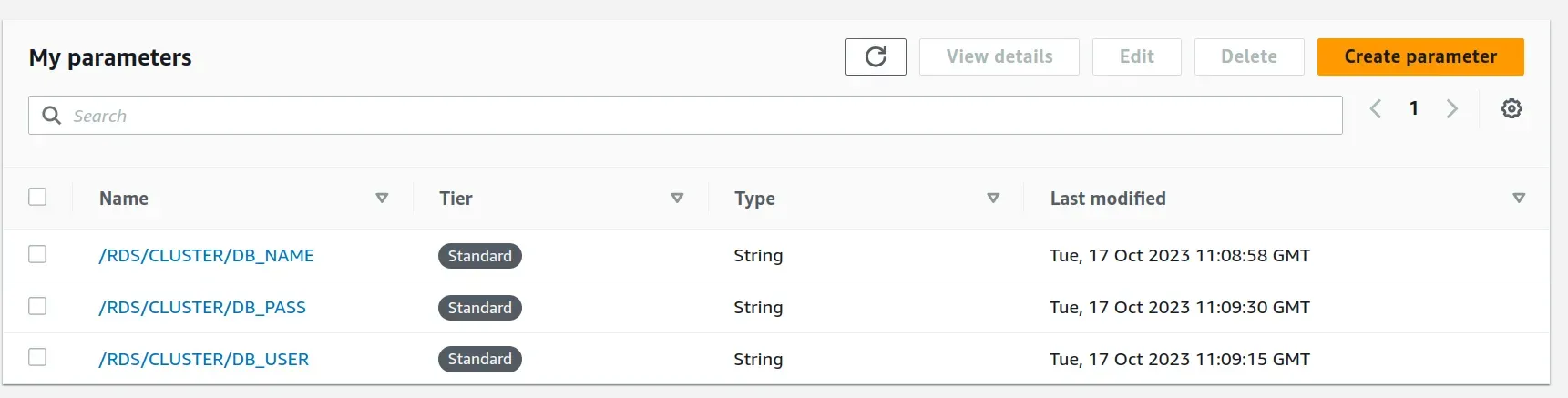

For example, to back up all the parameters whose names start with /RDS/CLUSTER/DB, enter /RDS/CLUSTER/ as the common filter and DB as the prefix. The script then backs up parameters such as /RDS/CLUSTER/DB_NAME and /RDS/CLUSTER/DB_USER, and uploads the file to S3 if the user chooses to.

The values are written to a file named ssm-backup-DB.txt, one KEY = VALUE pair per line.
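To make the filter and the output format concrete, here is a small offline sketch of the same selection logic. The helper names `select_parameters` and `format_line` and the sample values are made up for illustration; only the `startswith(path + prefix)` test and the KEY = VALUE layout come from the script.

```python
def select_parameters(parameters, path, prefix):
    """Keep only the entries whose name starts with path + prefix."""
    wanted = path + prefix
    return [p for p in parameters if p["Name"].startswith(wanted)]


def format_line(entry):
    """Render one entry as a 'KEY = VALUE' line."""
    return f"{entry['Name']} = {entry['Value']}"


# Sample entries shaped like the items in a get_parameters_by_path response.
# The values are invented for this example.
sample = [
    {"Name": "/RDS/CLUSTER/DB_NAME", "Value": "example_db"},
    {"Name": "/RDS/CLUSTER/DB_USER", "Value": "example_user"},
    {"Name": "/RDS/CLUSTER/CONFIG_MODE", "Value": "example_mode"},
]

for entry in select_parameters(sample, "/RDS/CLUSTER/", "DB"):
    print(format_line(entry))
```

With the filter /RDS/CLUSTER/ and the prefix DB, only the DB_NAME and DB_USER entries are printed; CONFIG_MODE is skipped because its name does not start with /RDS/CLUSTER/DB.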

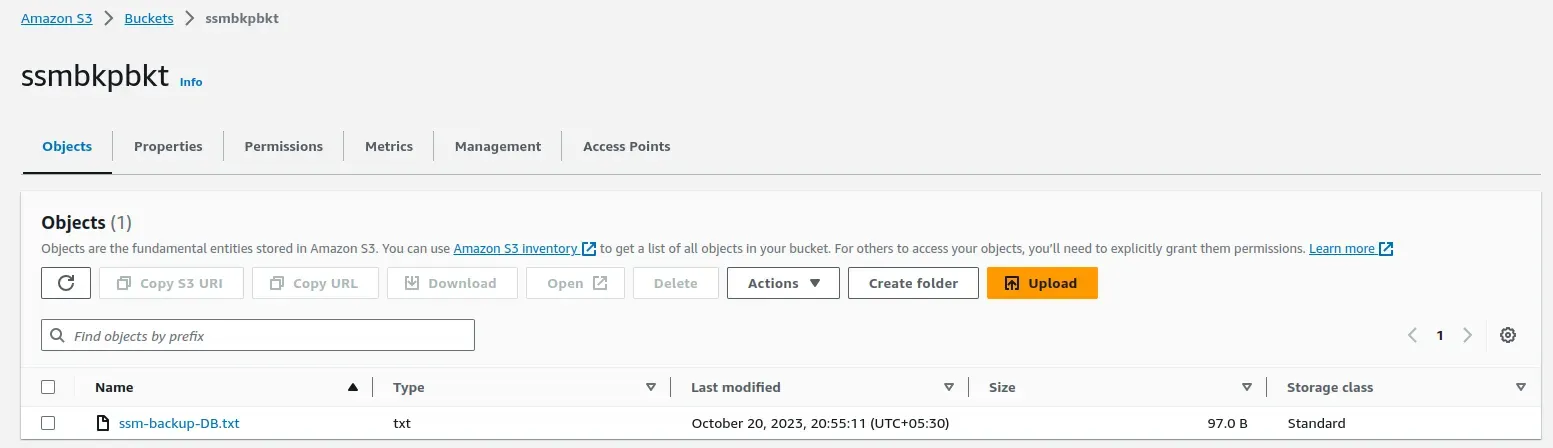

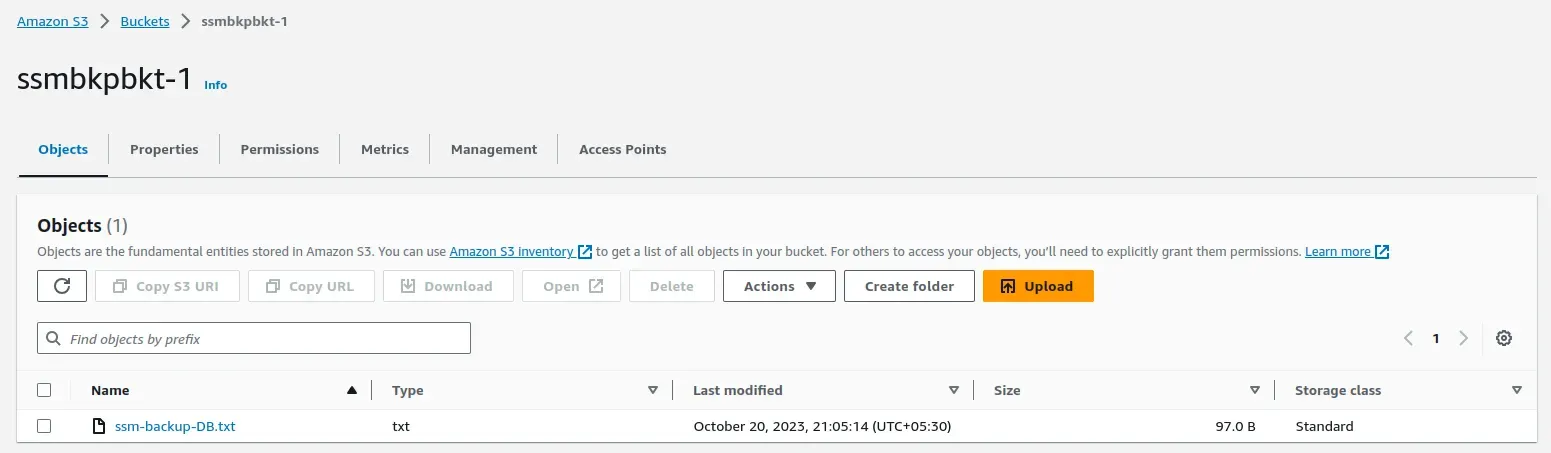

As the screenshot above shows, the script asks the user for the common filter and then for the prefix. After the script runs, the file ssm-backup-DB.txt is created; its contents are visible below. If you enter CONFIG as the prefix, the file name will be ssm-backup-CONFIG.txt. The file has also been uploaded to the specified S3 bucket.

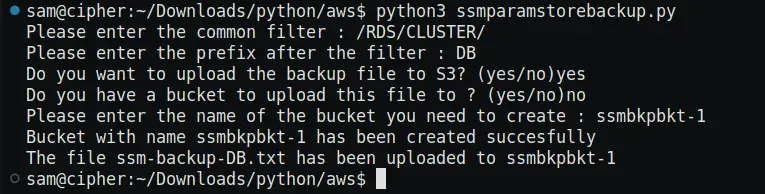
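If you would rather check a backup file from code than by eye, the KEY = VALUE lines can be read back into a dictionary. This is only a sketch: `parse_backup` and `load_backup` are hypothetical helper names, and it assumes each value fits on a single line, since a multi-line value would not survive this file format.

```python
def parse_backup(lines):
    """Turn 'KEY = VALUE' lines into a dict, skipping blank or malformed lines."""
    parameters = {}
    for line in lines:
        line = line.rstrip("\n")
        # Split on the first " = " only, so values containing " = " stay intact.
        name, separator, value = line.partition(" = ")
        if not separator or not name:
            continue
        # The backup script writes one space before the newline; drop only that one.
        if value.endswith(" "):
            value = value[:-1]
        parameters[name] = value
    return parameters


def load_backup(file_name):
    """Read a backup file such as ssm-backup-DB.txt from disk."""
    with open(file_name) as backup_file:
        return parse_backup(backup_file)
```

For example, `load_backup("ssm-backup-DB.txt")` returns a dictionary keyed by parameter name, which is handy for comparing a backup against the current values.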

If you don't have an S3 bucket, answer no and the script will prompt you for the name of a new bucket to create.

You can see from the above screenshot that a new bucket has been created and the file has been uploaded to the S3 bucket.
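One detail to watch when creating the bucket: S3 rejects a `LocationConstraint` of us-east-1, so for that region the `CreateBucketConfiguration` argument has to be left out. A possible way to handle this is sketched below; `create_bucket_kwargs` is a hypothetical helper name, not part of boto3.

```python
def create_bucket_kwargs(bucket_name, region):
    """Build the keyword arguments for s3.create_bucket in the given region."""
    kwargs = {"Bucket": bucket_name}
    # us-east-1 is the default location and must not be passed as a LocationConstraint.
    if region != "us-east-1":
        kwargs["CreateBucketConfiguration"] = {"LocationConstraint": region}
    return kwargs
```

In the script, the call would then become `s3.create_bucket(**create_bucket_kwargs(bucket_name, region))`, where `region` is the same region used for the session.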

These are the test parameters that I created for testing the script.

Now we have successfully generated backups of our parameter store. But what if something goes wrong and we need to restore these backup files?

You can follow this post (https://medium.com/supportsages/restoring-parameter-store-backup-automatically-using-python-fe5b3e0d0811) to restore parameters from a backup file.

That’s all. Thank you.
